Once again, with nothing in particular planned for a post, the internet has provided me with a hot topic that I both care about and know something about - and therefore a blog post topic.

This week: Apple’s Expanded Protections for Children. If you follow tech news, you’ve heard about it (probably a lot of wrong things, if what I’ve seen is any evidence), and you probably have an opinion on it, as, of course, do I. But my opinion and post are based on a week or so of reading things, pondering, and experimenting - not the quick hot takes that seem to pass for reporting these days. If you have a problem reading about child pornography (or, as the new term seems to be, Child Sexual Abuse Material/CSAM) and related filtering technologies, just close the page now.

CSAM? Huh?

Well, that was my first thought too. CSAM? Child Sexual Abuse Material? Apparently this is the new term for what, historically, would be called CP - Child Pornography. The old name is a bit more direct, the new name is… actually, last week was literally the first time I’d heard of it.

Anyway, this sort of material exists on the internet (which shouldn’t be a surprise to anyone), is quite illegal to possess in most countries, and there are some decently funded groups that try to ensure that it is, if not off the internet (which I would consider as close to impossible as anything one could consider doing with the internet), at least harder to find and driven to the dark corners of the internet involving terms like “bulletproof hosting” and “DarkNet” and “Tor” and such.

Anyway, Apple has decided to do something about it, in a range of ways, and has generally pissed off the internet in the process - or, at least, more of the tech circles than usual. Part of this is legitimate, part is based on extensive confusion of what they’re talking about, and part is based on what could happen out of it. Unlike a depressing number of journalists who have written on the subject (quite frequently wrongly), I took plenty of time with the source docs, dug through stuff, and have, as far as I know, a decent understanding of the system they’re proposing (at least as far as the public documents go). I’ve also gone about messing with the NeuralHash algorithm they’re using, and have some results from that project below.

Please. Use the Source Documents.

There is so much misinformation about this whole topic on the internet right now. If you want to actually understand what’s going on, please go read the actual source documents from Apple. They have an overview, and they have a bunch of PDFs linked at the end. You at least should read Expanded Protections for Children and CSAM Detection Technical Summary if you want to understand the issues more deeply than I cover here. Some information is also clarified in the FAQ added a few days after the initial documents.

And, continuing the trend of “No, wait, really, we swear we thought about this, look, another document!” that has been this release, after a week, Apple quietly added the Security Threat Model Review of Apple’s Child Safety Features document, adding quite a bit of detail to various policy aspects of what they’re doing - though it’s unclear as to how many of those policy changes existed at the time of the initial release, and how many were added in the last week in response to the criticism.

You should probably also read the report on the leaked internal memo that is the source of the phrase, “the screeching voices of the minority” - as you’ll see that term floating around a bit. That phrase isn’t used by Apple, but is from the National Center for Missing and Exploited Children (NCMEC), who is the source of the image hashes Apple is using, and obviously is approved by Apple, at least for internal distribution.

Anyway. Don’t trust what you read on the internet, especially around this topic, without verifying it with the source stuff. Hey, don’t even trust me without verifying it, though I’m certainly a week late and not in it for the clicks.

The Three Prongs: Siri, iMessage, Photos/iCloud

There are three distinct things being discussed by Apple here - and other than being under the same general umbrella, right now, they don’t seem to have any interactions with each other. This is where a lot of people have been getting it wrong.

- Siri and search are getting “expanded guidance” on the subject of CSAM, reporting exploitation, etc.

- iMessage is getting the ability to, on the device, detect nudes and, depending on the age of the user of the phone, report things to the parental phones, or just make the user click through a blur to see the image.

- Before uploading any photos to iCloud, images are being scanned against a database of hashes provided to Apple by the NCMEC, with matches contributing to a somewhat novel crypto envelope system, with high match counts being reported to the appropriate authorities for action (after disabling the Apple account).

Specifically, the second and third do not interact - at least as far as I can find from the documents. So, in more detail…

Siri and Search Changes

The change that’s gotten the least coverage, because there’s simply not much to say about it, is that Apple is committing to do something or other with Siri and search to improve informational responses.

I can just quote the entire section from their document, it’s short:

Expanding guidance in Siri and Search

Apple is also expanding guidance in Siri and Search by providing additional resources to help children and parents stay safe online and get help with unsafe situations. For example, users who ask Siri how they can report CSAM or child exploitation will be pointed to resources for where and how to file a report.

Siri and Search are also being updated to intervene when users perform searches for queries related to CSAM. These interventions will explain to users that interest in this topic is harmful and problematic, and provide resources from partners to get help with this issue.

These updates to Siri and Search are coming later this year in an update to iOS 15, iPadOS 15, watchOS 8, and macOS Monterey.

I’ll let someone else do their journalistic duty to ask Siri about child exploitation and such and see if the responses are any better than they were before the changes. I don’t use voice assistants (except to troll people who have them listening in their house - apparently Alexa can only order 10 tons of gravel, not 12), so… I really don’t have any strong opinions on this part beyond “I’m not going to be the one asking Siri about exploited children.”

iMessage Sexting Filters

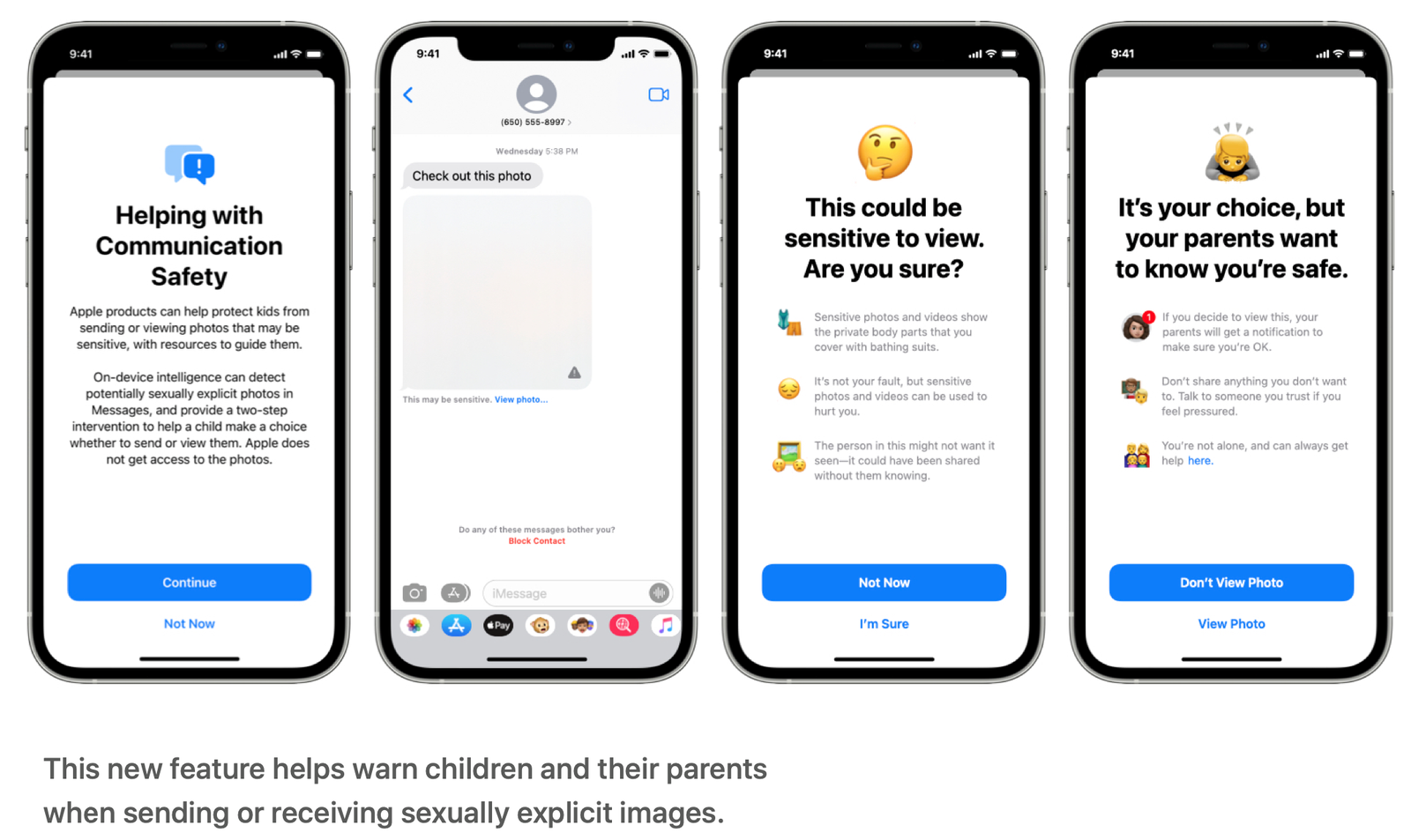

Apple is adding what amount to on-device, machine-learning based “sexting” filters that can be enabled (opt-in) for “child” accounts on phones (under-18). According to the FAQ, the “parental notification” bits can only be turned on for children under the age of 12 - so if your 13 year old is sexting, they’ll get the blurry bits and warning, but the parent doesn’t get any notification.

The FAQ is quite clear on this point:

Will parents be notified without children being warned and given a choice?

No. First, parent/guardian accounts must opt-in to enable communication safety in Messages, and can only choose to turn on parental notifications for child accounts age 12 and younger. For child accounts age 12 and younger, each instance of a sexually explicit image sent or received will warn the child that if they continue to view or send the image, their parents will be sent a notification. Only if the child proceeds with sending or viewing an image after this warning will the notification be sent. For child accounts age 13–17, the child is still warned and asked if they wish to view or share a sexually explicit image, but parents are not notified.

This is the part of the system with on-device machine learning, and based on previous attempts to machine-learning identify naked people (see Tumblr), I expect this to prove somewhat hysterical for groups of kids trying to figure out what the most absurd thing they can send their friends that shows up blurred is. It may also be of some use to parents who have no idea what their kids are doing on their phones, but I expect it to be reasonably easily bypassed by any creative kid who wants to sext, as it gives instant feedback about what is and isn’t flagged. We’ll see if the “machine learning” bit includes, “The 10 pictures you’ve tried to send in the last 5 minutes have all been flagged, maybe we should just go ahead and flag the 11th too…” sort of learning.

My preferred solution to the problem looks a lot like “There is no way my preteen has a smartphone and the house computer is quite visible,” but I expect this is an unpopular opinion on the issue.

The Threat Model document (a week later) confirms that the only thing sent to the parent’s phone is a notification - not the actual image in question.

For a child under the age of 13 whose account is opted in to the feature, and whose parents chose to receive notifications for the feature, sending the child an adversarial image or one that benignly triggers a false positive classification means that, should they decide to proceed through both warnings, they will see something that’s not sexually explicit, and a notification will be sent to their parents. Because the photo that triggered the notification is preserved on the child’s device, their parents can confirm that the image was not sexually explicit.

Again… I’m not going to test this feature. To the best of my knowledge, I have zero pictures of naked people on my phone, and I’m certainly not going to add some to see how absurd the machine learning is. It’s a bit old fashioned, to be sure, but if you’d rather not have pictures of your bait and tackle leaked on the internet, don’t take pictures of your Austin Powers Rocket Bits. At least not on your phone. Standalone digital cameras without WiFi are cheap now!

NeuralHash Scanning iCloud Uploads for Known CP

The last bit is the one that really has people up in arms (though more so if they confuse it with the previous one, as seems common), and is the part that really pushes against a range of boundaries.

This is the part where, before any photo from your phone is uploaded to iCloud (which, I believe, is the default setting for a new device), it is scanned (on your device) with an image hashing algorithm against an encrypted list of “known bad images,” with the results hidden, obfuscated, wrapped with a couple layers of interesting crypto, and sent up to the cloud for later analysis.

This part does not use machine learning - it’s not looking for “Well, this looks like it might be a picture of a child in a bondage dungeon…” sort of images. It’s looking for very specific images that are “known bad material,” with a hash algorithm that is (supposedly) robust against things like resizing and small amounts of cropping (which I’ll dive into a bit later).

Apple’s initial documents on this focused very, very heavily on the crypto aspects and ignored the policy aspects - with later documents expanding on the policy side extensively, though how much you believe policy matters depends an awful lot on how much you know about the whole “National Security Letter with Gag Order” thing that goes on.

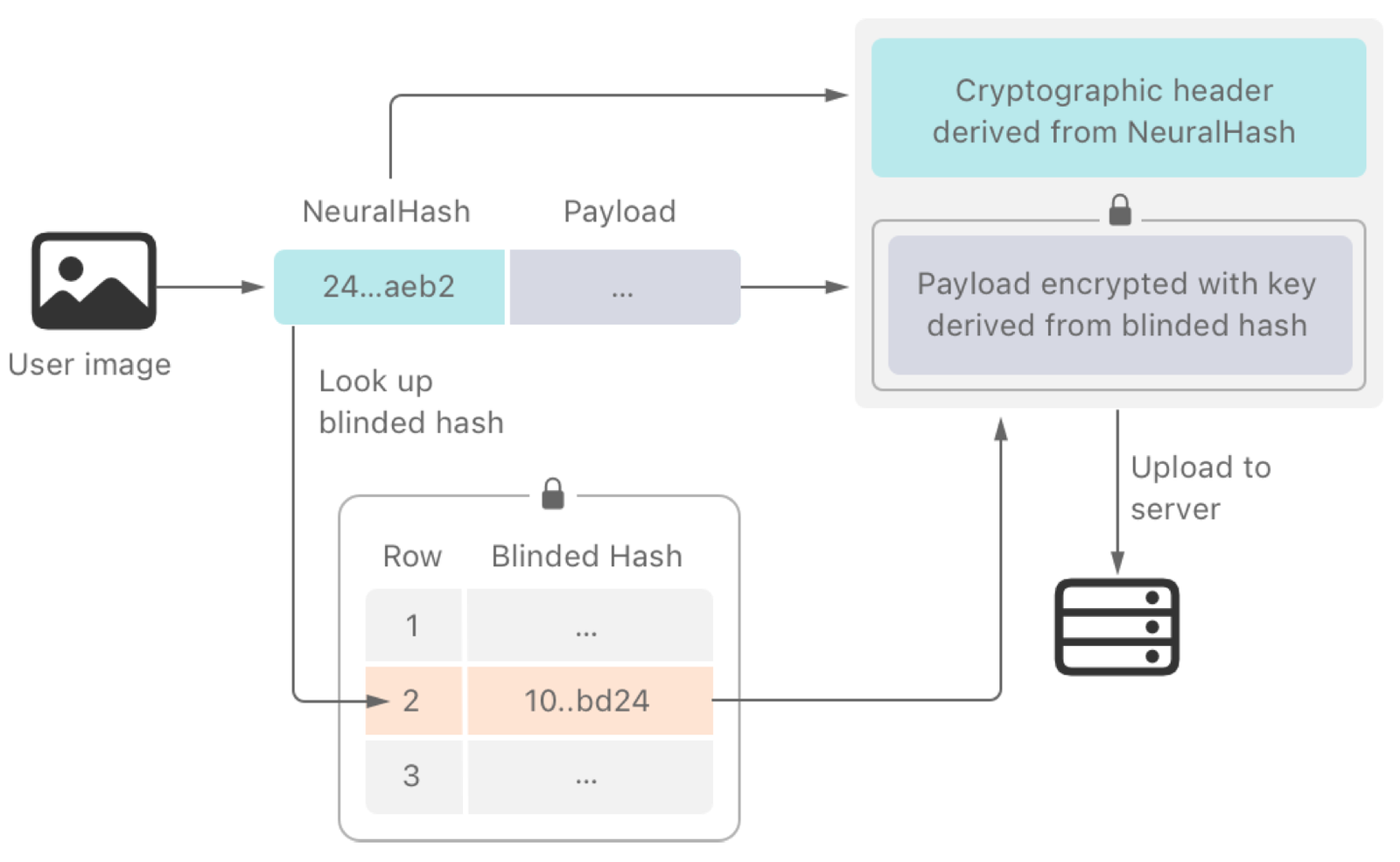

The process, in a nutshell:

- Apple encrypts an externally provided database of “known bad image perceptual hashes” and embeds it in their OS.

- Before uploading an image to iCloud, your device runs the perceptual hash algorithm on the image, comparing against the encrypted database in such a way that your device does not know if it matches, but the attached content can only be decrypted if there is a match.

- Your device also packages some data derived from a decryption key such that if the remote server has enough of these blobs, they can assemble the decryption key. Enough is defined by Apple.

- This whole package (image or a derived image - slightly unclear, and what Apple calls the “safety voucher”) is uploaded to the iCloud servers.

- On some interval, the iCloud system compares the submitted safety vouchers with the hash database it knows about, finding matching images in accounts. This allows the system to attempt to generate a decryption key from the fragments, and if enough matching images are present, the fragments form the decryption key to then analyze the actual image content of all matching images.

- These images are subject to a manual review process to determine if they are actually CSAM. If yes, the account is disabled and all relevant information is provided to the proper authorities.

I’m going to go into more depth in these steps below - but this is what the whole matching process looks like.

Apple is really proud of the crypto parts of it. They have the algorithm description (in the usual “means something to crypto experts” style document), and they have a couple statements by well enough known cryptographers that the system works as advertised. It’s a really odd document package for something like this, but it’s what Apple has chosen to focus on. I have my thoughts on this, but… first, the process in more detail.

The Source of the Encrypted Hashes

If some of the following stuff seems a bit confused and scattered… that’s only because Apple’s documents are confused and scattered. The later documents tend to add a lot of additional information that wasn’t present in the early documents, and I tend to believe this is because Apple is pretty much winging it right now, putting out responses to concerns on the fly based on what they think they might be able to do.

Remember: This system is only being deployed in the US right now. So:

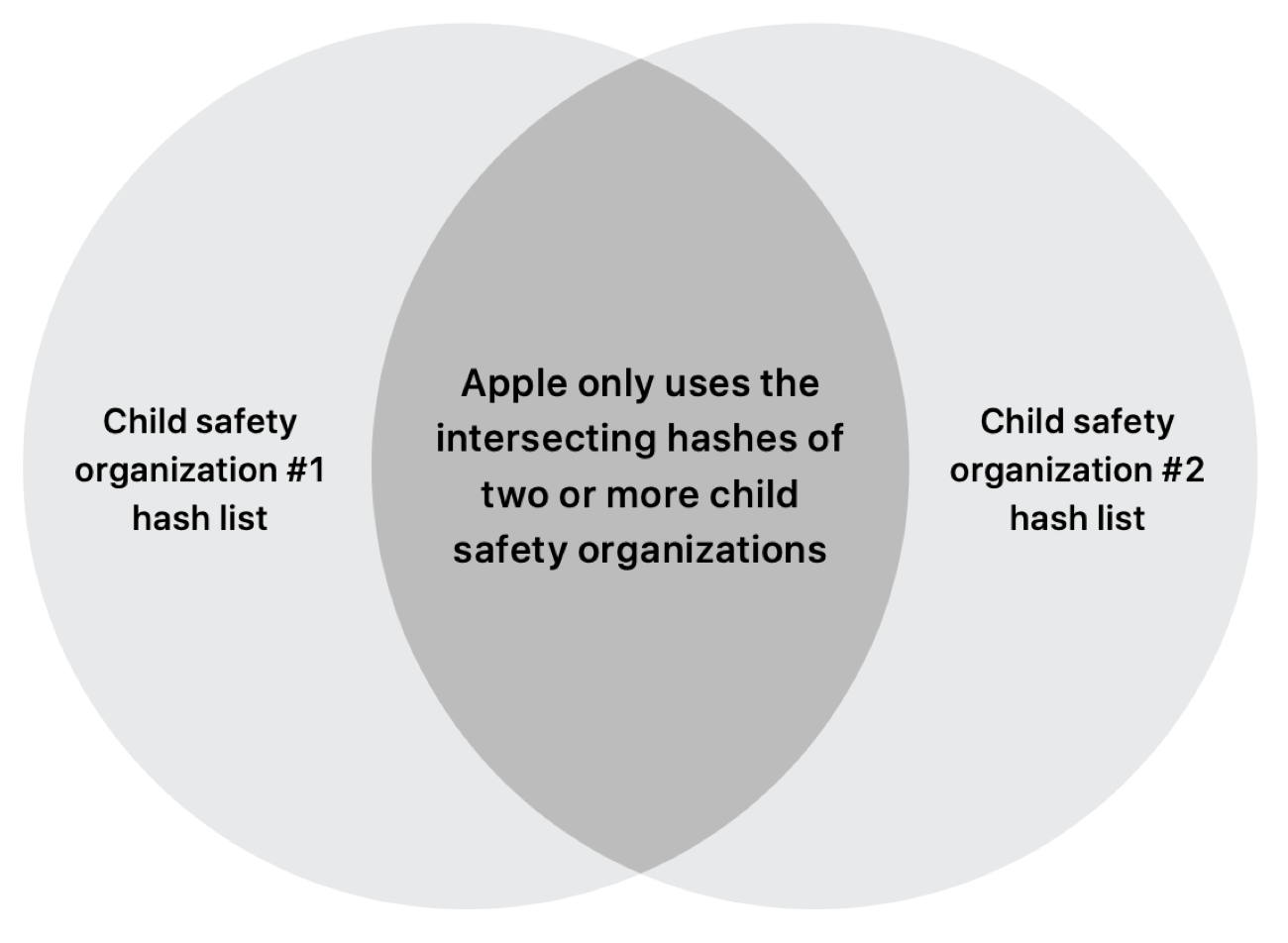

In the initial documents, Apple was pretty vague about where the perceptual hashes were coming from. The only source listed was NCMEC, though they suggested others might be adding hashes too.

Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC and other child-safety organizations. Apple further transforms this database into an unreadable set of hashes, which is securely stored on users’ devices.

There were some very obvious concerns here about hash sources, and this wording certainly implies (though doesn’t outright state) that if other groups had hashes, they could get tossed into the pot as well.

A week later, in the threat models document, there’s a rather more specific claim made:

The on-device encrypted CSAM database contains only entries that were independently submitted by two or more child safety organizations operating in separate sovereign jurisdictions, i.e. not under the control of the same government.

And, clarifying later just to make sure it’s clear:

Apple generates the on-device perceptual CSAM hash database through an intersection of hashes provided by at least two child safety organizations operating in separate sovereign jurisdictions – that is, not under the control of the same government. Any perceptual hashes appearing in only one participating child safety organization’s database, or only in databases from multiple agencies in a single sovereign jurisdiction, are discarded by this process, and not included in the encrypted CSAM database that Apple includes in the operating system. This mechanism meets our source image correctness requirement.

This appears to be in response to the criticism that if you rely on one group for hashes, and that group is a closely government tied and government funded “NGO,” well… pressure could be applied, hashes could be added. This new requirement, that two different groups, in different countries, have to submit the same hash, mitigates this (I would expect the intersection is rather smaller than each country’s individual database). Though if one asked what other countries would consider submitting their CP databases to Apple to help police US citizens, the obvious answer would be someone in Five Eyes, which might make some people a bit uncomfortable…

In any case, Apple is clearly trying to ensure that people have some trust in the source of the hashes. They even talk about how an auditor could come into a secure room on campus and verify the results. Users will be able to verify the database hash on device, and (in theory) other people will be able to validate the generation of it.

This approach enables third-party technical audits: an auditor can confirm that for any given root hash of the encrypted CSAM database in the Knowledge Base article or on a device, the database was generated only from an intersection of hashes from participating child safety organizations, with no additions, removals, or changes. Facilitating the audit does not require the child safety organization to provide any sensitive information like raw hashes or the source images used to generate the hashes – they must provide only a non-sensitive attestation of the full database that they sent to Apple. Then, in a secure on-campus environment, Apple can provide technical proof to the auditor that the intersection and blinding were performed correctly. A participating child safety organization can decide to perform the audit as well.

Third Party Audits and Code Review

Before I continue any further, it’s worth mentioning that the threat modeling document includes the phrase “subject to code inspection by security researchers like all other iOS device-side security claims” a whopping five times. Is there some program for security researchers to get the code to inspect that I’ve never heard of? I’ve looked around and I sure can’t find anything of this sort mentioned anywhere.

NeuralHash: Perceptual Image Hashing

A standard hash function (MD5, SHA256, etc) takes some input stream of data and creates a fixed length output that is sensitive to any changes in the data stream - change one bit, you’ll change the output hash. Perceptual image hashing applies the same sort of concept to images: If the image is the same, even if it’s been resized or slightly modified, the hash is (supposed to be) the same.
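To make the “change one bit, change the output” behavior concrete, here is a minimal sketch using Python’s standard `hashlib`. The input bytes are just a placeholder string I made up, not image data, and the helper names are mine.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def bits_different(hex_a: str, hex_b: str) -> int:
    """Count the bits that differ between two equal-length hex strings."""
    return bin(int(hex_a, 16) ^ int(hex_b, 16)).count("1")


# Placeholder input; any byte string works here.
original = b"the same picture, byte for byte"
# Flip the lowest bit of the first byte and leave everything else alone.
flipped = bytes([original[0] ^ 0x01]) + original[1:]

digest_a = sha256_hex(original)
digest_b = sha256_hex(flipped)

print(digest_a)
print(digest_b)
print(bits_different(digest_a, digest_b), "of 256 output bits differ")
```

A one-bit change in the input typically flips around half of the output bits, which is exactly the property a perceptual hash is trying not to have.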

It’s worth hammering home the point that this is not the machine learning stuff that is used in the iMessage scanning. This is image hashing. “Are these two pictures the same, even given some processing?” It’s not using machine learning to try and find out if an image is a problem, it’s looking to see if it’s a known image.

Apple is really proud of their NeuralHash algorithm, and goes on at some length about it in the initial description document:

NeuralHash

NeuralHash is a perceptual hashing function that maps images to numbers. Perceptual hashing bases this number on features of the image instead of the precise values of pixels in the image. The system computes these hashes by using an embedding network to produce image descriptors and then converting those descriptors to integers using a Hyperplane LSH (Locality Sensitivity Hashing) process. This process ensures that different images produce different hashes.

The embedding network represents images as real-valued vectors and ensures that perceptually and semantically similar images have close descriptors in the sense of angular distance or cosine similarity. Perceptually and semantically different images have descriptors farther apart, which results in larger angular distances. The Hyperplane LSH process then converts descriptors to unique hash values as integers.

The system generates NeuralHash in two steps. First, an image is passed into a convolutional neural network to generate an N-dimensional, floating-point descriptor. Second, the descriptor is passed through a hashing scheme to convert the N floating-point numbers to M bits. Here, M is much smaller than the number of bits needed to represent the N floating-point numbers. NeuralHash achieves this level of compression and preserves sufficient information about the image so that matches and lookups on image sets are still successful, and the compression meets the storage and transmission requirements.

The neural network that generates the descriptor is trained through a self-supervised training scheme. Images are perturbed with transformations that keep them perceptually identical to the original, creating an original/perturbed pair. The neural network is taught to generate descriptors that are close to one another for the original/perturbed pair. Similarly, the network is also taught to generate descriptors that are farther away from one another for an original/distractor pair. A distractor is any image that is not considered identical to the original. Descriptors are considered to be close to one another if the cosine of the angle between descriptors is close to 1. The trained network’s output is an N-dimensional, floating-point descriptor. These N floating-point numbers are hashed using LSH, resulting in M bits. The M-bit LSH encodes a single bit for each of M hyperplanes, based on whether the descriptor is to the left or the right of the hyperplane. These M bits constitute the NeuralHash for the image.
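The last step of that description, the hyperplane LSH, is simple enough to sketch. This is only an illustration of the general technique: the hyperplanes here are random, the descriptor is random numbers standing in for a neural network’s output, and the sizes `N` and `M` are arbitrary picks of mine, not Apple’s values.

```python
import random


def make_hyperplanes(m, n, seed=0):
    """Generate m random hyperplanes (normal vectors) in n dimensions."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(m)]


def lsh_bits(descriptor, hyperplanes):
    """One bit per hyperplane: which side of the plane the descriptor is on."""
    bits = []
    for plane in hyperplanes:
        dot = sum(d * w for d, w in zip(descriptor, plane))
        bits.append(1 if dot >= 0 else 0)
    return bits


def hamming(a, b):
    """Number of positions where two bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


N, M = 16, 32  # arbitrary illustrative sizes, not Apple's
planes = make_hyperplanes(M, N)

rng = random.Random(1)
descriptor = [rng.gauss(0.0, 1.0) for _ in range(N)]
nearby = [d + rng.gauss(0.0, 0.01) for d in descriptor]  # tiny perturbation
unrelated = [rng.gauss(0.0, 1.0) for _ in range(N)]

base = lsh_bits(descriptor, planes)
print("nearby differs in", hamming(base, lsh_bits(nearby, planes)), "bits")
print("unrelated differs in", hamming(base, lsh_bits(unrelated, planes)), "bits")
```

Only the direction of the descriptor matters, not its length, which is why the quoted text talks about angular distance. A descriptor that sits close to one of the hyperplanes can still land on the other side after a small perturbation, flipping a bit.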

Got all that? Great. They totally nerded harder here, and want to show it off. It doesn’t matter a bit, what matters is how this black box neural thing performs.

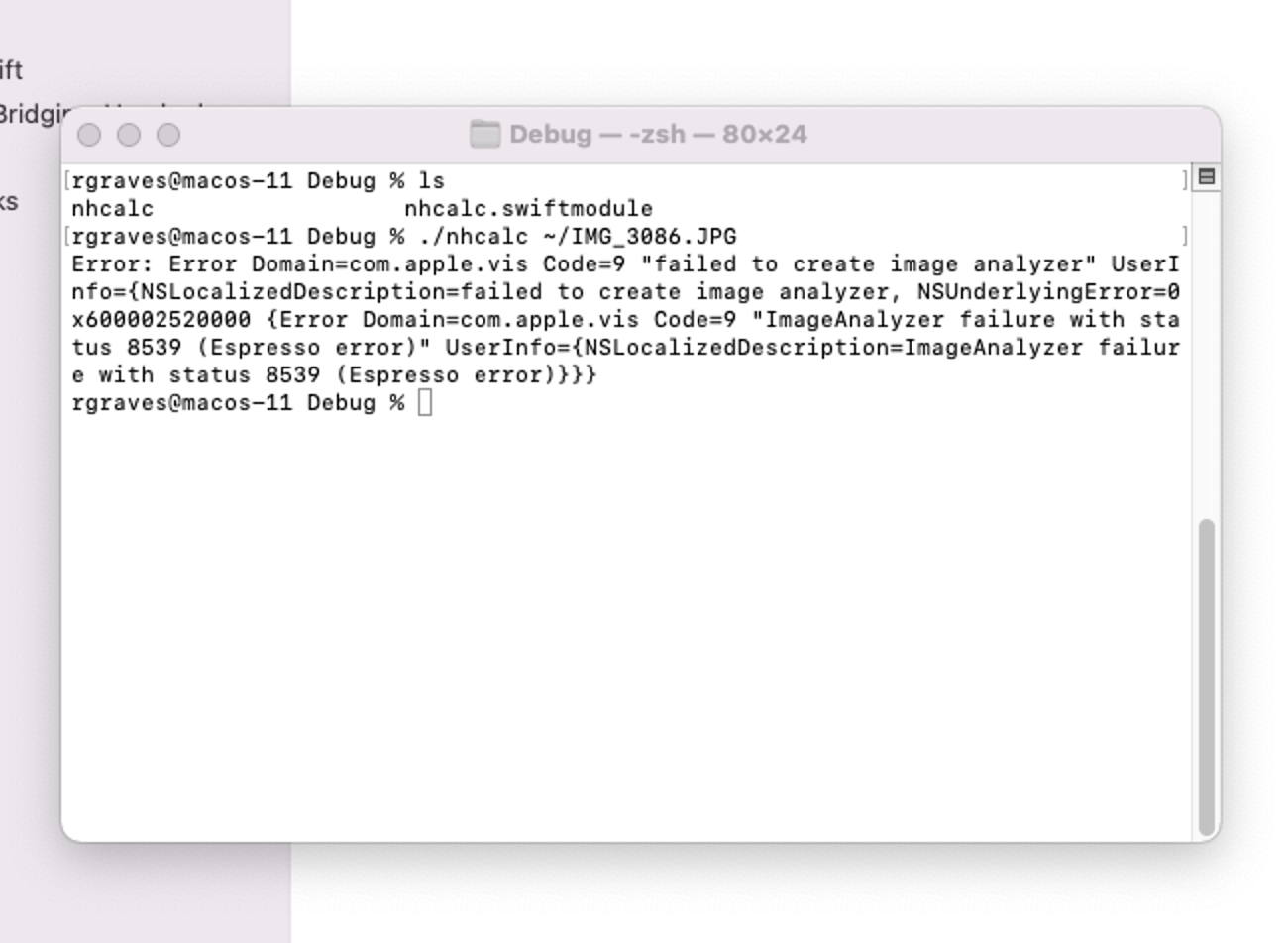

For that, we turn to nhcalc by Khaos Tian over on Github. This little utility calls into the core graphics libraries to compute a NeuralHash over an image. I tried it on the Monterey beta, but it won’t work there, so I’m using my 11.5.2 install for testing. Given how sensitive the crypto is to the hash output, I don’t think there’s going to be much in the way of changes to the algorithm before release, though obviously that will be verified in short order by other people on release.

My first question was about image reproducibility. If I took the same shot multiple times, would the hashes agree? So, I pointed my camera at our game cabinet, fired off a few shots with the flash, and hashed the images. For these sets of hashes, I’m going to be putting the actual image hash, then the bitwise difference (XOR) from the “reference” photo, shown in hex. If it’s all 0s, the hash is identical, otherwise it differs in the non-zero bits.

With flash:

6973587030527a64756a5a2b4b7a7950

6973587030777a64756a5a2b4b7a7950 (00000000002500000000000000000000)

6973587030527a2f756a5a2b437a7950 (000000000000004B0000000008000000)

So far, so good. But what if I try the same shot, hand held, with exposure locked?

616347325151704f6b42704253513737

616347325151704f6b42704253513737 (00000000000000000000000000000000)

625147325551704f6b42704236417266 (03320000040000000000000065104551)

Hm. Well, the first two images aren’t the same, but they have the same perceptual hash. It’s surprising that it tolerates that much from handheld shots - that wasn’t with a tripod. Useful data. All three shots are moved relative to each other, though the third shot is a bit more of a shift.
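If you want to reproduce the difference column yourself, it’s just a byte-wise XOR of the two hex strings. A minimal sketch, using the three flash-shot hashes from above (the function names are mine):

```python
def hash_diff(reference_hex: str, other_hex: str) -> str:
    """XOR two equal-length hex strings and return the result as upper-case hex."""
    ref = bytes.fromhex(reference_hex)
    other = bytes.fromhex(other_hex)
    if len(ref) != len(other):
        raise ValueError("hashes must be the same length")
    return bytes(a ^ b for a, b in zip(ref, other)).hex().upper()


def differing_bits(diff_hex: str) -> int:
    """Count the set bits in a hex diff, i.e. how many bits changed."""
    return bin(int(diff_hex, 16)).count("1")


# The three "with flash" hashes listed above.
reference = "6973587030527a64756a5a2b4b7a7950"
second = "6973587030777a64756a5a2b4b7a7950"
third = "6973587030527a2f756a5a2b437a7950"

for other in (second, third):
    diff = hash_diff(reference, other)
    print(diff, differing_bits(diff), "bits differ")
```

An all-zero diff means the two strings are identical, and the bit count gives a rough idea of how far apart two non-matching hashes are.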

For the rest of my experiments here, I’m going to use a standard issue forensic cat. Sadly, this cat is no longer with us, having disappeared at some point.

4032x3024, 5.5 MB

67754679302f666177336b7a3834656e

The first claim of the algorithm is that it’s robust against resizing. Well. Let’s shrink it down until the hash changes. I’m just resizing it down to a few common sizes I might use for a 4:3 image.

1600x1200, 1.2 MB

67754679302f66617733307a3834656e (000000000000000000005B0000000000)

800x600, 311 KB

67764679302f666177333079386f656e (000300000000000000005B03005B0000)

320x240, 53 KB

69764e79552f66617733307a3834656e (0E0308006500000000005B0000000000)

Um… well, that was weird. All I did was resize it down in a standard application (Preview) and the hash changed. As far as I can tell from the algorithm docs, any change in the hash makes the match invalid.

Under the logic that maybe cats don’t resize well (even though they seem to enjoy being stuffed in boxes), I tried the same experiment with a recent picture with some people in it. Results:

4032x3024, 1.2 MB (HEIC)

537471766c6737546a4735365476794a

4032x3024, 2.4MB (JPG)

537471766c6737526a4735365476794a (00000000000000060000000000000000)

1600x1200, 568 KB (JPG)

537471766c6737526a4735365476794a (00000000000000060000000000000000)

800x600, 192 KB (JPG)

537471766c6737526a4735365476794a (00000000000000060000000000000000)

320x240, 44 KB (JPG)

537471766c6772526a4735365476794a (00000000000045060000000000000000)

In this case, the initial conversion from HEIC to JPG caused two bit flips in the hash, which did remain as I resized down and exported to JPG, though things started to diverge at 320x240. But even a simple format conversion can break the hash.

What if we actually try to break it without disrupting the core of the image, which is the cat?

I’ve made a few slight crops to the image, just trimming edges. By about 95% or so, the hash starts to change.

4032x3024, 5.5 MB (original cat)

67754679302f666177336b793834656e

3976x2972, 5.4 MB (slight crop, ~98.5%)

67754679302f666177336b793834656e (00000000000000000000000000000000)

3809x2819, 4.9MB (slightly more crop, ~94.5%)

676d4679772f6561773330793834656e (001800004700030000005B0000000000)

So, very slight crops certainly will be ignored by the hash algorithm. Any meaningful edit, though… no.

What if we play with colors? I tried a few things - going down to black and white, and then playing with levels a bit. Push exposure, pull exposure… they all change the hash. This is slightly darker than the original image.

Black and White

677546796c2f646177336b7a3834656e (000000005C0002000000000300000000)

Push exposure slightly

676e4679302f646177336b7a38346533 (001B000000000200000000030000005D)

Pull exposure slightly

67754679302f666177336b7a3834656e (00000000000000000000000300000000)

Maybe it’s just having trouble with the cat. Trying with the kid image, the same sort of slight exposure pull accomplishes a hash match, though pulling a bit more out (this is far from a seriously darkened image) blows the hash up by a few bits.

Original (JPG)

537471766c6737526a4735365476794a

Black and White

517475726c4262546a4f39364476794a (020004040025550600080C0010000000)

Push exposure slightly

537471766c6762546e4735365476794a (00000000000055060400000000000000)

Pull exposure slightly

537471766c6737526a4735365476794a (00000000000000000000000000000000)

Pull exposure slightly more

537471766c673752724735365476794a (00000000000000001800000000000000)

What if I try to add some decorations?

I added a few decorations in the corners, and the hashes still matched.

Adding a border, though. That did enough.

Original

67754679302f666177336b793834656e

Corners

67754679302f666177336b793834656e (00000000000000000000000000000000)

Borders

67754879782f6561773377792b34656e (00000E004800030000001C0013000000)

Now, if you want to go totally crazy… rotate the image.

Original

67754679302f666177336b793834656e

Rotated left 90 degrees

6969542f78503257372f4761776e5a6e (0E1C1256487F5436401C2C184F5A3F00)

Rotated right 90 degrees

6e6d446a78763156315867613039626d (0918021348595737466B0C18080D0703)

You get the point. Play with it yourself, entertain yourself by creating images. I expect, since the bit positions seem to correlate to parts of the image, one could probably reverse a small image from a hash (not that you’d get it in plaintext form on the device, but it would be a neat little bit of work).

I would describe it as “tolerant of some of the most minor changes possible, and utterly incapable of handling even the slightest directed effort at bypassing it.” A simple image rotation entirely scrambles it, though it would be easy enough for the source images to be rotated before hashing as well (at the cost of 4x the database size/count). However, I don’t think it matters. As I read the crypto bits, a single bit flip makes things “not a match.”

The Crypto Bits

Going from NeuralHash matches to something Apple can take action on involves some fairly interesting crypto that Apple seems very proud of - and this is what they seem to think is the key to the whole thing being totally fine to roll out.

And… reading through it, assuming their implementation isn’t braindead, I think it will do what they claim it will do, and I think it will provide the guarantees they claim it will - just, this is only true if the policies surrounding the crypto are solid as well.

Apple has covered the crypto in great detail in their documents, should you care to really dive in, so I’m just going to cover it at a high level. I’m already far longer than I’ve been trying to write lately…

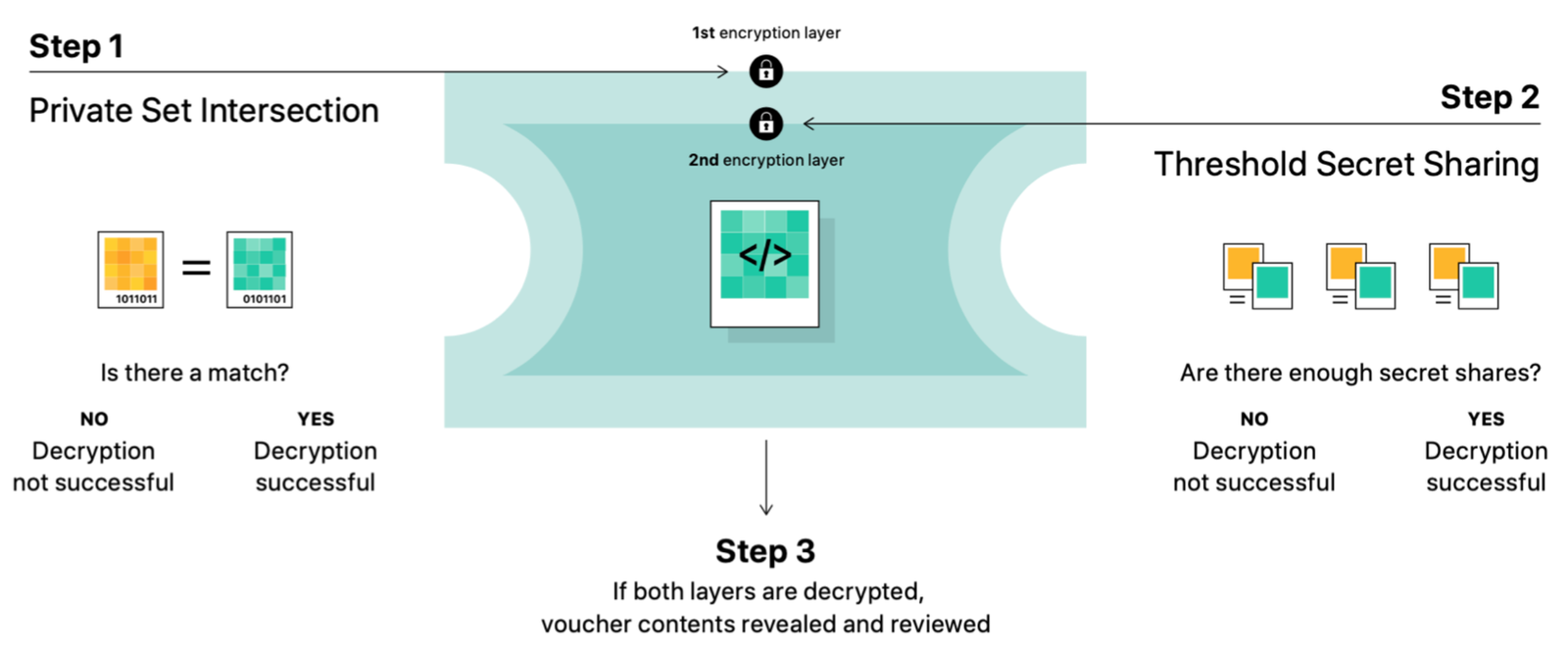

Private Set Intersection

The first part is the private set intersection (PSI). This bit of crypto allows you to take some hash output (the NeuralHash output), and compare it, “blindly,” with some list of bad hashes, encrypted such that the device doing the comparison cannot know the results of the operation. The phone is unable to know if there is a match between what it hashed and what’s in the bad list - only the server is able to do so, using the secret involved in the blinding step.

With this step applied, the server checks each uploaded image to see if everything matches. If the decryption process reveals nonsense, then the image hash didn’t match anything in the bad list. If the decryption process reveals valid crypto headers and image formats, then the server knows that the image hash matches a bad one.
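The “nonsense versus valid headers” check is easy to picture with a toy. To be loud about it: this is not private set intersection. It skips the blinding entirely (here the sender could trivially check for a match itself), and the magic header, key derivation, and SHA-256 keystream are all things I made up for illustration, not anything from Apple’s documents, and not something to use for real encryption. It only shows the idea that a payload keyed on the image hash decrypts to something recognizable only when the other side holds the same hash.

```python
import hashlib

MAGIC = b"VOUCHER1"  # made-up header used to recognize a good decryption


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key plus a counter. Illustration only."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def derive_key(image_hash: bytes) -> bytes:
    return hashlib.sha256(b"key:" + image_hash).digest()


def seal(image_hash: bytes, payload: bytes) -> bytes:
    """Encrypt header + payload under a key derived from the image hash."""
    plain = MAGIC + payload
    return xor_bytes(plain, keystream(derive_key(image_hash), len(plain)))


def try_open(blob: bytes, known_bad_hashes):
    """Try each known hash; return the payload if one yields a valid header."""
    for known in known_bad_hashes:
        plain = xor_bytes(blob, keystream(derive_key(known), len(blob)))
        if plain.startswith(MAGIC):
            return plain[len(MAGIC):]
    return None  # every attempt produced nonsense: no match


# Hashes borrowed from the cat experiments, purely as sample values.
bad_list = [bytes.fromhex("67754679302f666177336b7a3834656e")]
matching = seal(bad_list[0], b"visual derivative")
other = seal(bytes.fromhex("67754679302f66617733307a3834656e"), b"vacation photo")

print(try_open(matching, bad_list))
print(try_open(other, bad_list))
```

Note that the second hash differs from the listed one by only a few bits, and the result is still garbage. That is the behavior the real scheme relies on too, as far as I can tell from the documents.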

However, Apple hasn’t stopped here. To prevent the server from being able to identify a single match, they’ve added another step - Threshold Secret Sharing.

Threshold Secret Sharing

The inner layer of the encryption uses a technique called “Threshold Secret Sharing.” This allows for some private key to be encoded in parts, such that if you have enough pieces, you can recover the key. If you have 1000 fragments, you can set the parameters so that you can recover the key with any 10 of them - or any 30, or whatever you want.
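The classic construction for this is Shamir’s secret sharing, and I’m using it here purely as an illustration of the general idea. I’m not claiming Apple’s implementation looks like this. The prime, the secret value, and the function names are my own picks; the 1000 fragments and threshold of 10 are the numbers from the paragraph above.

```python
import random
import secrets

PRIME = 2**127 - 1  # a Mersenne prime, large enough for a toy secret


def split_secret(secret: int, threshold: int, num_shares: int):
    """Hide the secret as the constant term of a random polynomial of
    degree threshold-1, and hand out points on that polynomial."""
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, mod PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares) -> int:
    """Lagrange-interpolate the polynomial at x = 0 to get the constant term."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # Divide by den using Fermat's little theorem for the modular inverse.
        secret = (secret + yi * num * pow(den, PRIME - 2, PRIME)) % PRIME
    return secret


key = 123456789  # stand-in for a decryption key
shares = split_secret(key, threshold=10, num_shares=1000)

enough = random.sample(shares, 10)
too_few = random.sample(shares, 9)

print(recover_secret(enough) == key)   # any 10 shares recover the key
print(recover_secret(too_few) == key)  # 9 shares give an unrelated number
```

With fewer shares than the threshold, the interpolation still produces a number, it just has nothing to do with the key.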

By doing this, Apple can identify match counts, but not actually decrypt the attached image data until some threshold count has been passed.

Synthetic Match Vouchers

To prevent even being able to reliably identify the count of matches, Apple has also added some “false positives” with garbage data into the system. Every so often, the system will create a false match (using PSI), but with garbage data for the Threshold Secret Sharing part - so it appears to be a match, but if you have 9 true positives and one false positive with a threshold of 10, you still can’t recover the secret. Or so Apple claims. Others disagree. Without being able to experiment with the whole system, it’s impossible to say for sure.

The combined system requires some number of PSI matches, with some number of valid TSS entries, in order to be able to decode any of the image content matching hashes.

In the threat modeling document, Apple implies that they will probably set a threshold of around 30, but reserve the right to adjust it.

Building in an additional safety margin by assuming that every iCloud Photo library is larger than the actual largest one, we expect to choose an initial match threshold of 30 images. Since this initial threshold contains a drastic safety margin reflecting a worst-case assumption about real-world performance, we may change the threshold after continued empirical evaluation of NeuralHash false positive rates – but the match threshold will never be lower than what is required to produce a one-in-one-trillion false positive rate for any given account.

It’s unclear how changing this threshold value would impact existing matches, and this is likely to be implementation specific.
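To get a feel for why the threshold matters so much for the per-account false positive rate, here’s a rough binomial-tail sketch. The per-image false positive rate and library size below are numbers I made up for illustration, not Apple’s figures, and it assumes false matches are independent from image to image, which is a generous assumption.

```python
import math


def log_binomial_pmf(n: int, k: int, p: float) -> float:
    """Log of the probability of exactly k false matches in n images."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log1p(-p))


def prob_at_least(n: int, threshold: int, p: float) -> float:
    """Probability of at least `threshold` false matches in n images."""
    total = 0.0
    for k in range(threshold, n + 1):
        term = math.exp(log_binomial_pmf(n, k, p))
        total += term
        # Past the mean the terms only shrink, so stop once they stop mattering.
        if k > n * p and term <= total * 1e-18:
            break
    return total


PER_IMAGE_RATE = 1e-6   # made-up per-image false positive rate
LIBRARY_SIZE = 100_000  # made-up photo library size

for threshold in (1, 5, 30):
    print(threshold, prob_at_least(LIBRARY_SIZE, threshold, PER_IMAGE_RATE))
```

Under these made-up numbers, a threshold of 1 would flag a meaningful fraction of large libraries by accident, while a threshold of 30 pushes the accidental rate far below one in a trillion. None of this says anything about deliberately crafted collisions, which is a different problem.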

Again, I’ve just glossed over this, and if you want more details, including some proofs of correctness, Apple has published them for you to poke through. I’m not currently skilled enough in the art of deep crypto to be able to do anything more in depth than look over them and say, “Yes, this looks like a reasonable set of things to do.” I’m willing to bet there will be some interesting papers on the ways this breaks, though I wouldn’t bet on them breaking the core guarantees of the algorithm.

My analysis of the process also indicates that it’s almost certainly using standard cryptographic hashing operations - that a single bit flip in the hash makes a hash “not match.” There’s no mention of any sort of fuzzy hashing process between the NeuralHash output and the database of bad hashes, so I have to assume those single bit flips I found from altering images will make things “not match.” It’s certainly possible that they have found a way to handle “close” hashes, but it doesn’t seem to be evident in any of the documentation.
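Here's a small sketch of why a single flipped bit matters once a cryptographic step sits between the perceptual hash and the comparison. SHA-256 is only my stand-in for "some exact-match cryptographic operation" (the real system uses a blinded PSI protocol, not a bare SHA-256), and the 12-byte value is a made-up placeholder for a NeuralHash output.

```python
import hashlib

def hamming(a: bytes, b: bytes) -> int:
    """Number of differing bits between two equal-length byte strings."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))

# Made-up value standing in for a perceptual hash of some image.
original = bytes.fromhex("a1b2c3d4e5f60718293a4b5c")
# The same value with exactly one bit flipped, as a slightly altered image might give.
flipped = bytes([original[0] ^ 0x01]) + original[1:]

# A fuzzy comparison on the raw hashes would call these "close".
raw_distance = hamming(original, flipped)

# After a cryptographic hash, closeness is gone: it's match or no match.
digest_a = hashlib.sha256(original).digest()
digest_b = hashlib.sha256(flipped).digest()
exact_match = digest_a == digest_b
digest_distance = hamming(digest_a, digest_b)

print(raw_distance, exact_match, digest_distance)
```

A fuzzy matcher would have to compare the raw perceptual hashes directly, and as far as I can tell from the documents, the blinding step means neither side ever gets to do that.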

The Manual Verification Process

Finally, if there are enough matches, Apple claims that a manual verification process will be performed on the matching images to verify that they’re CP.

From the Threat Modeling document (which goes into far greater detail than the previous documents):

Once Apple’s iCloud Photos servers decrypt a set of positive match vouchers for an account that exceeded the match threshold, the visual derivatives of the positively matching images are referred for review by Apple. First, as an additional safeguard, the visual derivatives themselves are matched to the known CSAM database by a second, independent perceptual hash. This independent hash is chosen to reject the unlikely possibility that the match threshold was exceeded due to non-CSAM images that were adversarially perturbed to cause false NeuralHash matches against the on-device encrypted CSAM database. If the CSAM finding is confirmed by this independent hash, the visual derivatives are provided to Apple human reviewers for final confirmation.

The reviewers are instructed to confirm that the visual derivatives are CSAM. In that case, the reviewers disable the offending account and report the user to the child safety organization that works with law enforcement to handle the case further.

They do recognize the weaknesses in the hashing algorithm used here - that someone could make innocent images match “known bad” images, if someone had that set of hashes (which I assume is floating out there somewhere, either in the form of the hashes, or in the form of the original images that could be hashed).

If they’re convinced that it’s CP, your account is disabled and law enforcement is brought in. I would expect some people to very, very carefully experiment with this process after release in ways that make it very difficult for them to actually be found. VPNing into the US, while spoofing location, from a very foreign country seems a decent start to experimenting with the process.

On the other hand, there are groups focused enough on “lulz” who might simply decide it’s good sport to overwhelm Apple’s manual review process entirely, and find ways to just spam the system endlessly.

How this works in practice is yet to be seen.

If Governments Come Knocking…

One of the original concerns (rightly so) is that various governments could simply say, “Hey, listen, we know you’ve got this thing that can scan images, so, would you be so kind as to add this list of known dank memes involving a certain honey loving bear being compared to a national leader? And maybe set the threshold to 1 for us?”

Apple, in the FAQ, responded with a strongly worded statement:

Could governments force Apple to add non-CSAM images to the hash list?

No. Apple would refuse such demands and our system has been designed to prevent that from happening. Apple’s CSAM detection capability is built solely to detect known CSAM images stored in iCloud Photos that have been identified by experts at NCMEC and other child safety groups. The set of image hashes used for matching are from known, existing images of CSAM and only contains entries that were independently submitted by two or more child safety organizations operating in separate sovereign jurisdictions.

In the Threat Modeling document, they expand on this somewhat, claiming both that the list is part of the immutable OS image (so individual users can’t be sent special content), and that the chain to generate it will be auditable:

Distribution of the perceptual CSAM hash database

The perceptual CSAM hash database is included, in an encrypted form, as part of the signed operating system. It is never downloaded or updated separately over the Internet or through any other mechanism. This claim is subject to code inspection by security researchers like all other iOS device-side security claims. Since no remote updates of the database are possible, and since Apple distributes the same signed operating system image to all users worldwide, it is not possible – inadvertently or through coercion – for Apple to provide targeted users with a different CSAM database. This meets our database update transparency and database universality requirements. Apple will publish a Knowledge Base article containing a root hash of the encrypted CSAM hash database included with each version of every Apple operating system that supports the feature. Additionally, users will be able to inspect the root hash of the encrypted database present on their device, and compare it to the expected root hash in the Knowledge Base article. That the calculation of the root hash shown to the user in Settings is accurate is subject to code inspection by security researchers like all other iOS device-side security claims.
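Apple doesn't spell out how the root hash is computed, so what follows is purely my guess at the general shape of such a check: a Merkle-style root over the database entries, compared against a published value. The tree layout, the use of SHA-256, the domain-separation bytes, and the entry contents are all assumptions for illustration.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root(entries):
    """Root hash over a list of byte-string entries (hypothetical layout)."""
    if not entries:
        return _h(b"")
    # Prefix bytes keep leaf hashes and interior hashes from colliding.
    level = [_h(b"\x00" + e) for e in entries]
    while len(level) > 1:
        nxt = [_h(b"\x01" + level[i] + level[i + 1])
               for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:  # odd node out is carried up unchanged
            nxt.append(level[-1])
        level = nxt
    return level[0]

# Placeholder entries standing in for encrypted database records.
shipped_db = [b"entry-%d" % i for i in range(5)]
published_root = merkle_root(shipped_db).hex()  # what a Knowledge Base article would list

# A device with the same database agrees with the published value...
device_ok = merkle_root(list(shipped_db)).hex() == published_root
# ...and a device with one extra targeted entry does not.
device_tampered = merkle_root(shipped_db + [b"extra-entry"]).hex() == published_root

print(device_ok, device_tampered)
```

This only proves that everyone has the same database. It says nothing about what's in it, which is why the audit question matters more than the root hash does.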

So, taken at face value, this does imply that Apple is attempting to prevent use of the CSAM database for other things. Though, national security letters with gag orders are a thing, and unless regular audits are performed (may I suggest Bruce Schneier as a trustworthy person to audit these?), I don’t have amazing confidence that this will remain only a CP database over time.

Read the Source, Dood!

Again. Read the source documents. Please. There is so much misinformation about all this on the internet right now, and Apple’s statements have been expanded over time. If you want to discuss details, I expect you to at least understand what Apple’s directly stated claims are.

Now. Onto the speculation. I speculate, with my little microarchitecture…

Why?

The obvious question here is, “Why on earth is Apple doing this?” Over the last week, it’s been exceedingly clear that they’re going to do this - it’s not a project idea they’re floating. This has been announced, more or less fully formed, from the forehead of Apple, and it sounds like it’s part of the next Apple operating system releases - like it or not.

They’ve also burned an exceedingly large amount of “privacy capital” on this - the backlash on the internet has been strong, sustained, and at least somewhat justified.

I find it somewhat hard to believe that they’ve just done this for the sake of doing it. It’s far more likely that they have their ears to the ground and hear the approaching hooves of something - either that they were told “You should do this or something very unfortunate is going to happen to your company,” or that they think this is likely enough to have tried to get ahead of the process.

The obvious pairing with this sort of idea is full end to end encryption of data in iCloud Photos - that in exchange for this on-device scanning, they’re able to deploy a much stronger set of protections for other photos that are warrant-proof. Except… Apple hasn’t said anything about this. Even as they’re being raked over the coals for this, they’ve not mentioned a thing about future iCloud encryption options that would increase privacy. So, right now, the only conclusion one can make is that this is an “and” sort of system - “What we can do right now, and this new thing.”

Will it Work?

Do I think the system will work as advertised? If you assume that there exists some set of people who will use an iPhone with iCloud Photo backup enabled, who download CSAM on their phone directly from websites that serve it unaltered, that exists in the intersection of multiple countries’ hash databases, add it to their photo library, and let it be uploaded, then, yes, I do think it will properly flag those accounts.

That’s an awful lot of ifs in a row, though. And they’re now at least mostly known.

Apple has been exceedingly clear that if you turn off iCloud Photo backup, they don’t scan anything. We’ll have to see the implementation code and some analysis work to verify, but they’ve been really, really clear on this - so, presumably, your on-device library is not going to be scanned without uploading (at least initially).

If websites serving content make slight alterations to the images before serving, that will alter the hashes enough that they no longer match. Rotating them 90 degrees is more than enough to totally scramble it. Or if users do this, then, again, the hashes won’t match.

I have to assume that people downloading CP have at least a slight clue that it’s not exactly acceptable, and that they might not want to back the images up to the cloud. On the other hand, there’s no shortage of “dumb criminal” stories about people who do things like leave their license plate or ID behind at the scene of some attempted crime or another.

The big gaping hole in this mechanism is that it can only catch known content. If you have something newly created, it won’t be in the “known databases” yet. I’m unclear as to whether the system will go through and retroactively hash images on the device if the hash database is updated, or if it will only apply to new images. This is the biggest hole in the system as I see it, and puts it in the category of “Apple was told to do something, and this is something.”

Other Wacky Ideas…

Could this be a sort of Schindler Gambit? The FBI was whining a few years back about how, of course secure backdoors are doable, Silicon Valley just needs to, like… nerd harder, or something.

This looks a lot like “nerding harder,” and they’ve got the crypto papers to prove they did so.

If it doesn’t work (which it doesn’t seem terribly likely to), then they can say, “Look, you told us to do this sort of thing, we did, and it doesn’t work. Criminals aren’t stupid, now how about you go do some legwork instead of demanding we do it all for you?”

I don’t think this is at all likely. But it’s an interesting possibility to ponder.

Do I Like It?

Now, do I like this? Not at all. The crypto may be solid - we’ll have to wait for someone to play with the isolated implementations to figure this out, because the problems with crypto are almost always the implementations, not the concepts. But I simply don’t trust all the policies required to keep the crypto working securely - an awful lot of it relies on Apple’s saying “Well, we just won’t do that if someone asks.” Quite a few companies over the years that have said, “We won’t X…” tend to break under sustained legal pressure from governments. A rare few simply burn down the whole company instead of cooperating, but I don’t expect Apple to do this. They’ve bowed to China over iCloud, they will bow again. To borrow a well known joke, “Oh, we know what kind of a company you are. Now we’re just haggling about price.”

I view this as a step change in “bad content” detection. It moves from “Cloud providers using their servers to detect bad things” to “My device is being used to detect bad things before going to cloud servers.” Even if Apple does enable E2E encryption for photos, I consider this “using my device against me.” It’s running code that does not benefit me, using my energy, under the assumption that I’m guilty until proven innocent by a lack of hash match. On top of that, I don’t think it’s very likely to work.

I’m entirely aware that mobile devices frequently run code that doesn’t benefit me (the standard adware/tracking toolkits), and I have done my best to avoid that sort of crap on my devices. I consider this to be a far more insidious form of crap, being built into the OS.

Even though I don’t use iCloud Photos, I’m very much opposed to the basic principle of on-device scans for someone else’s definition of badness. And I’m willing to bet that it doesn’t stop with this point - whoever is applying pressure will whine that it’s not doing enough, and the scope will creep, and creep. Slowly, such that the backlash can be managed over time, but still creeping.

Also, Apple has made it clear that at least some of these technologies are coming to future versions of macOS. We’ll see where that ends up, though, ideally, I can rely on other people’s experimentation. I’m not going to be doing much, because…

What I’m Doing

Am I going to whine about not liking it, and do what most people seem to be set on, which is going “Well, yeah… but Apple is super convenient, I might think about it at some point when I have to buy a new device… ugh… moving would be a pain…”?

Nope.

It’s not just this. I’ve been increasingly uncomfortable with the modern tech ecosystems, and Apple in particular, for a while now. I don’t like what’s still going on with Foxconn (“Dying for an iPhone” is a good read here), and I’m increasingly uncertain that it’s even possible to use modern consumer electronics ethically with how user-toxic the entire system is becoming (Windows 11 Home not allowing offline accounts is another data point here). I’m pretty sure that the right answer is not to lean further into it, and for a variety of reasons, this, to me, serves as the “kicker” to push me over the edge and really drive me out.

Dear Apple: It’s not me, it’s you. I don’t know what you’re doing, but I don’t like a bit of it, and I’m not going to stick around to find out where it ends. I’ve found something new to be my phone, and it’s blindingly yellow. And curved.

I will be one of those “screeching voices of the minority” who objects to the concept enough that I will move myself away from Apple devices.

I’ve already purchased a non-Apple, non-Android phone that I’m using as my primary mobile device (that I’ve been de-fanging my phone for years now makes the transition somewhat easier than it might be for some), and I’m going to be getting rid of Apple hardware rather rapidly as primary use hardware, not buying new Apple hardware, removing as much information as feasible from my accounts, and figuring out how to not give Apple any more money for hardware or software.

Is this going to be a trivial change? Nope. I’ve been an Apple user since… oh, 2003 or so? Titanium Powerbook era. I’ve had plenty of iOS devices over the years. I stood in line for the iPad when it came out.

And I think this era simply has to end. I’m not OK with where Apple has clearly chosen to go, and if there’s zero financial impact to them, well… that’s just a message that they can stare down the “screeching minority” and win. They’re welcome to try. I’m not going to help them.

I’m entirely aware that I’m giving up a very nice, well integrated platform, and wandering into the weeds of “Stuff that sort of works, maybe.” I will probably end up with some mostly unused and limited-data Apple devices for some tasks (drone operations are one, at least until the drone ID requirements get finalized at which point I may be done with those), but… I’m just going to take the opportunity to head down the path I’ve been experimenting with as an alternative, which is a lot of weird little ARM devices. There are certainly going to be things I can’t do on them, and I’ll either find alternatives or simply not do them.

Expect more on this transition over time.
