If you’ve been paying attention to the news recently, you might be aware that the FAA has grounded the Boeing 737MAX series airplanes, pending investigation into what appear to be MCAS-caused crashes.

Since I’m a (private) pilot, and have more than a slight interest in automation systems, a few people have asked my opinion of the whole situation. Of course, this being me, the whole thing scope creeped badly into a post containing more than a few thoughts on Boeing, Airbus, control system design, automation, complexity… the works.

So, keep reading and let’s dive in.

What the Heck’s an MCAS?

Since it’s in the news, I’ll start with MCAS - a system that I think is quite poorly designed. That pilots weren’t made aware of it… well, really, my opinion here doesn’t matter too much, because the courts will work it out, and I sure wouldn’t bet on Boeing there.

MCAS (Maneuvering Characteristics Augmentation System) is a system that works around a quirk in the handling of a 737MAX to, on paper, make it fly exactly like the older -NG 737s. In certain flight conditions (flaps up, high angle of attack), the mounting of the new engines can cause the plane to rapidly pitch up - increasing the angle of attack (angle between airflow and the wing - higher angles of attack make more lift, right up until the airflow detaches, the wing stalls, and you head down in an awful big hurry). The MCAS system attempts to eliminate this unwelcome behavior by catching this condition and trimming the horizontal stabilizer (the tail fins on either side that regulate pitch) to push the nose down. If everything works, this is fine - it’s software dealing with hardware problems, and the last 50 years have a laundry list of software dealing with hardware problems, and an awful lot of hardware dealing with software problems too.

WHY does this system need to be in place? Common type ratings (and, perhaps, meeting FAA handling requirements). If you’re a pilot, your license allows you to fly some categories of airplane with nothing but a checkout by a qualified instructor. In the US, this includes pretty much anything single engine and under 12,500 lbs gross weight. I don’t need a separate FAA entry to fly a Cessna 152, 172, or 182 - they’re all “Aircraft, Single Engine, Land.” I should get a checkout, which involves reading the manual, going up with an instructor, and them generally ensuring I’m familiar with quirks (the 182s are nose heavy on landing and float if you fly book speeds while light, for instance) and won’t damage myself or the airplane (for instance, if you’re landing a 182 with a forward CG and the yoke isn’t in your lap, you’re likely to bang the nosewheel on the runway, which isn’t good). But I don’t need any specific training, and I don’t have a “Cessna 182” rating mentioned anywhere in the FAA databases.

For anything heavier, though, you need a type rating. This is aircraft-specific training that generally covers how to safely fly a particular airplane that’s a good bit more complex than a basic single engine airplane. I couldn’t just go jump in a Boeing 737 and fly it - though if I had a multi-engine rating and a commercial license, there are places that will happily take my money for a type rating. In some cases, you’ll find a “common type rating” - aircraft that are similar enough that a single rating covers multiple different airplanes. The Boeing 757 and 767 share a type rating, which means once you have it, you can fly either one without additional training. The various 737s share a type rating - which means you only need some basic transition training to switch between them. If you’ve seen (probably outraged) news about a “one hour iPad course,” that’s transition training in action - and, normally, it works pretty well.

Boeing, though, didn’t really feel the need to mention the MCAS system. They felt that it was just a quiet way to make a new jet fly like an old jet, that if it misbehaved, existing procedures for a trim runaway would probably cover it, and that it wasn’t a problem. They were, quite clearly, wrong.

But why did they need it in the first place? Why were there goofy aerodynamic corners on an old airframe? The engines.

Jet Engines: Bigger is Better

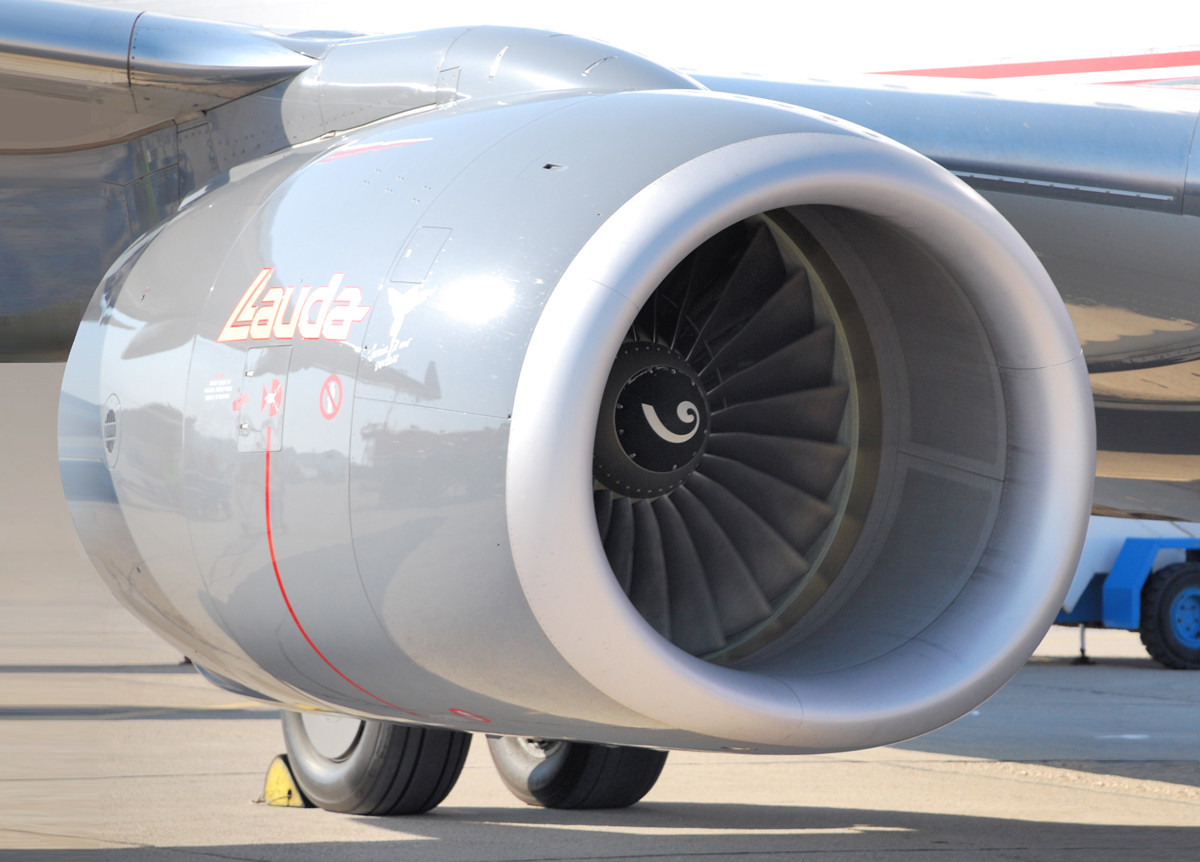

For a huge number of reasons, “Bigger is Better” when it comes to modern jet engines. Most people think a jet engine is pretty simple - cold air goes in the front, hot air goes out the back, and you get thrust. It’s true - but modern (transport category) airplanes don’t fly a straight turbojet, because they’re dreadfully inefficient - and also incredibly loud. You can build a small turbojet (the RC jet folks fly them), but the efficiency is beyond awful. Larger ones are better (the tolerances remain roughly the same as the diameter increases, which means a smaller percentage of the flow is lost around the tips), but no modern airliner has turbojets. They use turbofans - which are way, way more efficient.

Now, there’s something to be said for a jet engine with very little bypass, preferably afterburning, in the morning. A flight of 4 F-16s (technically low-bypass afterburning turbofans, but close enough in spirit) taking off beats the pants off any cup of coffee on the planet, if you’re into that sort of thing. However, most people living near airports seem not to be, and most airlines aren’t taxpayer funded. They use high-bypass turbofans.

A turbofan is a jet engine with a really, really big set of blades in front of the engine (these are what you see from the terminal, and the actual jet engine is a small core inside). The core of the engine mostly serves to turn the fan blades. The fan blades push air around the outside of the engine, and you get “a lot of air, moving a bit faster than the plane,” which is far, far more efficient than “a little bit of air, moving way faster.” On the -MAX, only 12% or so of the air goes through the core of the engine. The rest goes around the outside.
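To put rough numbers on the “a lot of air, moving a bit faster” argument, here’s a minimal sketch using the textbook ideal (Froude) propulsive efficiency. The function names and the speeds in the usage note are made up for illustration and aren’t figures for any real engine; only the 12% core fraction comes from the paragraph above.

```python
def bypass_ratio(core_fraction):
    """Ratio of air going around the core to air going through it."""
    return (1.0 - core_fraction) / core_fraction


def propulsive_efficiency(v_exhaust, v_flight):
    """Ideal (Froude) propulsive efficiency: 2 / (1 + v_exhaust / v_flight)."""
    return 2.0 / (1.0 + v_exhaust / v_flight)


def mass_flow_for_thrust(thrust_n, v_exhaust, v_flight):
    """Air mass flow (kg/s) needed for a given thrust, from momentum change."""
    return thrust_n / (v_exhaust - v_flight)


# Illustrative, assumed speeds in m/s (not real engine data).
V_FLIGHT = 250.0
FAST_JET_EXHAUST = 600.0   # "a little bit of air, moving way faster"
BIG_FAN_EXHAUST = 300.0    # "a lot of air, moving a bit faster"
```

With those assumed speeds, `propulsive_efficiency(600.0, 250.0)` comes out at about 0.59 and `propulsive_efficiency(300.0, 250.0)` at about 0.91. The catch is in `mass_flow_for_thrust`: for the same thrust, the slow exhaust needs seven times the air, which is why the fan has to be so big. And `bypass_ratio(0.12)` is a bit over 7, if you take the 12% figure at face value.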

But. To do this, you need a big fan. The 737 was not designed for a big engine. It was designed for a very long, fairly skinny engine - and this is evident in the old -200s. I may have asked, as a child, if the engines had afterburners, because the nacelle was just too insanely long for anything else. Sadly, no, they’re not afterburning. The short field performance would be awesome, though perhaps a bit hard on some runways.

To fit bigger engines, Boeing moved the engine nacelles forward, and up. To maintain ground clearance, they put all the various accessories (fuel pumps, generators, starters, etc) on the side of the engine (instead of underneath), and ended up with a slightly goofy looking intake that’s not exactly round. Bigger engine, better efficiency, lower fuel burn. Awesome! This engine powered quite a few 737s over time.

To fit the new, even bigger engines in the MAX, Boeing… moved the engines even more forward, and even more up. You maintain ground clearance this way, but the engines now are far enough forward, with enough surface area, that they’re basically wing extensions. Get the nose up far enough, and these suckers will start creating enough lift to cause problems - and that’s what MCAS is designed to prevent.

If a Single Sensor Lies, You Die

And now, sadly, I get to explain how MCAS has (likely) put one or two planes full of people into the ground. This is all speculation for now, and until the NTSB or appropriate reports come out, treat it as such.

Boeing, for reasons I cannot explain, decided that this particular system should only use one angle of attack sensor. The 737 has two of these sensors - one on each side. The MCAS system only uses one (some sources say the captain’s sensor, some say it alternates between the two sides from flight to flight). If the sensor is malfunctioning, the system, apparently, has no way to detect this and do something reasonable. Instead, it listens to the lying sensor and tries to shove the nose down. The system is supposed to only trigger until the angle of attack is reduced, but if you throw a lying sensor in (that, say, always says you’re at a very high angle of attack), the system begins running on the edge case behavior, which is really quite pilot-hostile.

It will trim nose down for 10 seconds (acting like runaway trim, which is something pilots train to deal with), then pause for 5 seconds - but then start right up again, and throw in another giant ball of nose down trim. Repeat until either the pilots figure out what’s going on and take the correct actions (about a system they didn’t initially know existed), or you run out of airspeed, altitude, and ideas. Though, with nose down trim like that, you’re not going to run out of airspeed before you run out of altitude.

When a pilot responds with manual trim commands to correct the out of trim condition, the MCAS system will cut out - but since the incorrect conditions that caused it to trigger (a bad AoA sensor) still exist, it will quietly wait a few seconds, then proceed to try to shove the nose over again.
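To see how quickly that adds up, here’s a toy model of the cycle as described above: 10 seconds of nose down trim, 5 seconds of pause, repeat for as long as the single sensor claims a high angle of attack. This is a sketch of the behavior as reported, not Boeing’s logic. The trim rate and its units are invented, the function name is hypothetical, and it ignores pilot inputs entirely.

```python
TRIM_ON_S = 10  # seconds of nose down trim per activation (from the text)
PAUSE_S = 5     # seconds of pause between activations (from the text)


def nose_down_trim_over_time(duration_s, aoa_reads_high, rate_per_s=0.25):
    """Return cumulative nose down trim (arbitrary units), one entry per second.

    aoa_reads_high: callable taking the time in seconds and returning True
        if the single sensor reports a high angle of attack.
    rate_per_s: hypothetical trim rate, NOT a real 737 figure.
    """
    trim = 0.0
    history = []
    phase_t = 0  # seconds into the current trim/pause cycle
    for t in range(duration_s):
        if aoa_reads_high(t):
            if phase_t < TRIM_ON_S:
                trim += rate_per_s  # still inside the 10 second trim window
            phase_t = (phase_t + 1) % (TRIM_ON_S + PAUSE_S)
        else:
            phase_t = 0  # sensor reads normal: system stays quiet
        history.append(trim)
    return history


def stuck_high(t):
    """A failed sensor that always claims a high angle of attack."""
    return True


def healthy(t):
    """A working sensor in normal flight."""
    return False
```

Run `nose_down_trim_over_time(60, stuck_high)` and the trim just keeps ratcheting in one direction every 15 seconds, because nothing in the loop ever questions the sensor. With `healthy`, it stays at zero the whole time.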

Worse, some of the trim cutout switches don’t apply to the MCAS system. The control column has cutout switches installed to deal with trim runaway conditions, and they act reasonably. If the trim system unexpectedly starts trimming the nose down, and the pilot pulls back on the yoke to correct the sudden nose down movement, the control column switches disable the nose down trim - and, as long as you’re pulling back to keep the nose where it should be against the trim, the runaway trim remains disabled while you sort out the details.

But, with MCAS, since the whole point is to shove the nose down, it ignores those switches. The MCAS system essentially says, “Well, yeah, the pilot is trying to pull the nose up, but I think it needs to go down, so I’m going to keep trying to get it down despite the pilot’s inputs.”

If you know what MCAS is, respond properly in what may be an increasingly unflyable airplane, disable the system, and get trim back to where it needs to be before running out of altitude, you just have a few uncomfortable moments in the flight, and write something up. But, if it triggers at low altitude (which is the case in both of the recent crashes), there’s simply not much time left to figure out what’s going on. Empirically, some flight crews don’t work out the details in time - and the CVR (cockpit voice recorder) transcripts will be very interesting to read.

This is a very un-Boeing-like system behavior, and I still can’t figure out why they designed it this way, because Boeing’s design philosophy has always been (and remains), “Let the pilot break the airplane, because they’re Pilot in Command, not the airplane.”

This is also a great case for adversarial system analysis and review. Any system, really, should be subject (in the design phase, but also as implemented) to people who ask, “How can I make this system misbehave in the worst possible way?” For an aircraft, plowing into the ground is a pretty bad result - and “Ok, what if the AoA sensor fails and just lies about 20-25 degrees nose up?” would be a reasonable question to ask in such a review. You might decide that, with two sensors, you should cross-check them and not repeatedly shove the nose down if they disagree.
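The cross-check itself is not complicated. Here’s a minimal sketch of the idea, assuming two angle of attack readings in degrees. The function name and both thresholds are hypothetical numbers picked for illustration, and this says nothing about how Boeing’s actual software is (or will be) written.

```python
def should_trim_nose_down(aoa_left_deg, aoa_right_deg,
                          high_aoa_deg=15.0, max_disagree_deg=5.0):
    """Decide whether an automatic nose down trim is justified.

    Both thresholds are made-up illustrative values.
    Returns False whenever the two sensors disagree by more than
    max_disagree_deg, on the theory that doing nothing (and alerting
    the crew) beats acting on a reading that may be a lie.
    """
    if abs(aoa_left_deg - aoa_right_deg) > max_disagree_deg:
        return False  # sensors disagree: don't act on either one
    # Sensors agree: act only if even the lower reading is high.
    return min(aoa_left_deg, aoa_right_deg) > high_aoa_deg
```

With one sensor lying at 22 degrees and the other reading 3, `should_trim_nose_down(22.0, 3.0)` returns `False`. With both reading around 18, it returns `True`. The design choice is that a disagreement fails toward leaving the airplane alone.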

Control System Philosophy: Boeing vs Airbus

I mentioned that this seems like an un-Boeing system. That relates to the general control system philosophy on modern airplanes.

Both Boeing and Airbus aircraft have computers that monitor things, and have their own opinions of what limits are. They’ll communicate this to the pilots through various mechanisms (backpressure in the controls, stick shakers, warning buzzers, etc) - but the difference is how they handle pilot input “beyond the edge of the envelope.” Airbus is simple: they don’t let the pilots break the airplane. If the computer thinks an input is a bad idea, it simply refuses to listen. Boeing, on the other hand, takes the opposite approach: the pilot has the final say, and if the pilot wants to do something the computer thinks is a bad idea, well, they’re the pilot, not the computers. And the airplane will obey the commands. There’s probably a very valid reason the pilot wants to pull a couple Gs in a transport category airplane, and it’s not the plane’s place to get in the way.

The Boeing philosophy of the pilot having the final say has been pretty standard throughout history in airplanes, but it stands in contrast to the Airbus control system design philosophy, which is that the computers are in control.

If I wanted to continue this bit of discussion, I could cover how a Boeing, even as automation drops out, still flies like an airplane, and an Airbus drops back from behaving a bit like a video game to flying like an airplane as stuff fails, but… it’s not entirely relevant here, and you can read the Air France 447 final accident report if you want the long form.

The MCAS system, operating in the background, is just a very odd bit of equipment for Boeing to put on one of their airplanes.

Data Promotion from Nice to Critical

If you’ve been reading the news lately (or, in my case, people link me to the news), you might also be aware that Boeing sold some of the alerting features as upgrades. Things like, “AoA sensor disagreement alerts.” Some airlines bought them, some didn’t - and, yes, at this point, that sort of thing is safety critical.

But it probably wasn’t always critical. If it was used for stall warning alerts, you can fairly easily override those - and they’re annoying (a stick shaker and eventually a stick pusher), but they’re also not at all subtle. If the stick shaker goes off at 230kt in climb… well, it’s almost certainly not right. Same for the pusher - it’ll certainly get your attention, but (at least on Boeing aircraft), you can just pull right past it until you disable it and maintain control. It’s not the same level of system failure as “I’m going to repeatedly trim nose down until you work it out, or hit the ground.”

For stall warning systems, a single AoA sensor is perfectly fine. For something like MCAS, it’s not - but, MCAS likely just tied into the existing systems, and, again, nobody (apparently) went through an adversarial reasoning process about the thing.

Other Boeing Problems: The 787 Battery (… and, well, the 787)

Boeing, historically, has been a very strong engineering company, run mostly by engineers - and it shows in their old airframes. In the past decade or so, they’ve lost quite a bit of that and have, in my opinion, lost a good bit of what made them Boeing. They’re trying to be good global corporate citizens and all that, which is fine in theory. In reality, it seems like Boeing has lost their engineering-driven approach to reality, and has turned into a subcontractor-wrangler more than a bunch of engineers and fabricators building damned good airplanes.

Nowhere is this better on display than the 787 Dreamliner. Boeing can build them - no question about it. What they don’t seem to be able to do is have a ton of subcontractors building pieces (to spread the wealth around to a bunch of companies around the world), and then assemble them - because the pieces didn’t fit together properly, and carbon fiber is a lot less forgiving than metal. Eventually, Boeing sucked a lot of the construction in-house (or bought out the subcontractors), and then things started working. They should have just skipped the whole mess and built airplanes the way they used to, with Boeing assembly lines, in Boeing facilities, with Boeing engineers upstairs.

And then there’s the battery. Several of them underwent thermal runaway, and if you’re a fan of long reports on lithium battery failures, the NTSB has plenty of good reading for you. The Japan Airlines APU Battery Fire report is a good overview, and the UL Forensic Report really digs into the cells and pack construction.

But, if you’re not going to read those, I’ll summarize for you: In my opinion, that is not an airworthy battery, and it should never have flown as assembled. I’ve not kept up with the issue to know what all Boeing did to fix it, but “Putting a big metal case around it and venting it overboard” (which is part of what they did) doesn’t solve the fact that somewhere in the line of contractors and subcontractors and such, a bad battery design got put into a brand new airplane. Plus, the weight of that housing defeats the purpose of the weight savings of a lithium battery over a NiCd or NiMH battery (both of which are far less prone to fail catastrophically than lithium).

I’m pretty sure I wouldn’t want those cells on an ebike, much less an airplane. Read the linked reports if you want great detail. Yes, they’re that bad.

Boeing really needs to get back to the basics - building good airplanes, and thinking things through.

Vehicle Automation, Autopilot, and Gorelust

If you pay attention to Tesla news, you may have seen that their recent software update brought back an exciting old feature they’d fixed a few revisions ago: The tendency of a Tesla, operating under Autopilot, to pick the worst of all possible options when a lane splits for a left exit. You could go left. You could go right. Or, you could split the difference and aim straight for the concrete divider in the middle, which is definitely not the correct option. If I understand the changelog, it doesn’t mention much beyond the usual “List of new features, oh, yeah, and some bugfixes,” and it certainly doesn’t mention details like “We’ve changed the algorithm for dealing with left exits, pay attention.”

This is the same category of problem as the Boeing system issues - an automatic system that “does something,” in a way that the people in control of the vehicle may or may not be aware of - and that requires quite rapid responses to avoid dying.

The self driving car companies are busy learning, their own way, lessons well learned throughout the history of aviation automation: Humans suck at monitoring systems that work very well for long periods of time, and then require nearly instant action to avoid disaster. You can argue all you want about how that shouldn’t be, but history shows humans are pretty predictable in this situation. If you hand back control to the human to avoid sudden disaster (or, worse, require them to notice that the automation has gone badly wrong all of a sudden), disaster is a likely outcome - though, to be fair, the disaster was “under human control,” so your automation systems don’t look bad if they’re able to detect the situation and hot potato control back.

You either need to keep the human in the loop and attentive, or the automation has to be able to correctly handle just about everything it comes across. I’m a big fan of various driver aids like lane departure warning and emergency braking, but things like autopilot are good enough, enough of the time, that they end up being trusted far, far more than they should be. If the automatic system can’t reliably detect stationary objects in the lane (it can’t), then it shouldn’t be trusted to allow the human to look away. GM’s SuperCruise works pretty well here, from what I understand, because it aggressively ensures the human is paying attention, and it only works on tightly mapped interstates (where it has the map data to reason about an upcoming left exit).

Plus - and this is a big one - when the automation fails, the human should be able to detect it, disconnect it, and take proper corrective action promptly. That’s where Boeing’s MCAS has really screwed up, because at least for the first crash, pilots couldn’t reason about the systems quickly enough. Because they didn’t know about it. We’ll see about the second crash when the reports come out.

Complex Systems and Reasoning About Them

All of this wraps around to one of the main problems with complex systems: They fail, often in ways that weren’t considered, and if a person doesn’t know them well, it’s impossible to reason about them.

Think about your computer (or phone, or tablet, or car…) and the last time it did something unexpected, weird, or otherwise not what you were hoping. Could you figure out what actually went wrong? Or did you just shrug, move on, and/or reboot?

Complex systems tend to fail in unpredictable ways, and depending on how safety critical they are, that can be very, very bad. The history of aviation is filled with complex failures, nuclear reactors are a fascinating set of complex failures, and I fully expect self driving cars to be a history of complex failures. Computers, the internet, and cell phones all fail in complex ways, but they tend to be an awful lot less life-threatening when they fail.

Importantly, complex systems need redundant inputs, and a proper set of sanity checks on the input. With the Boeing case, it looks an awful lot like one bad angle of attack sensor led to a failure that, improperly handled, crashed one and possibly two planes full of people into the ground. Hard.
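As an example of what a sanity check on a single input can look like, here’s a small sketch that rejects angle of attack readings that are outside a believable range or that jump faster than an airplane plausibly could. The function name and every limit in it are assumptions for illustration, not values from any real aircraft.

```python
def plausible_aoa(prev_deg, new_deg, dt_s,
                  min_deg=-20.0, max_deg=30.0, max_rate_deg_s=10.0):
    """Return True if a new AoA reading looks physically believable.

    prev_deg: previous accepted reading, or None if there isn't one yet.
    All limits are hypothetical illustrative values.
    """
    # Range check: outside this band the sensor is almost certainly broken.
    if not (min_deg <= new_deg <= max_deg):
        return False
    # Rate check: a huge jump between samples is more likely a fault
    # than a real change in the airflow.
    if prev_deg is not None and abs(new_deg - prev_deg) / dt_s > max_rate_deg_s:
        return False
    return True
```

So `plausible_aoa(5.0, 74.5, 1.0)` is rejected on range, and `plausible_aoa(5.0, 25.0, 0.1)` is rejected on rate. Note the limit of this approach: a sensor that is steadily wrong but believable still passes, which is exactly why the sanity checks need redundant inputs alongside them.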

It seems Boeing is changing the system to use both sensors (and to do nothing if they disagree) - but I still cannot wrap my head around them not doing this in the first place. Yes, pushing the nose over in edge cases is important, but a single sensor shouldn’t be able to cause the trim system to run repeatedly and shove the nose down!

Final Thoughts

So, that wraps up this week’s thoughts on MCAS, automation, and a few other things. Keep your systems simple, and your model of them up to date, and you’ll have a far better chance of reasoning about failures!

Of note, I have the initial plans review submission for home solar done, so hopefully in a month or so I’ll be able to get on with building it. Expect the next few posts to be something solar related!

And, since Google+ is down, feel free to either sign up for email notifications of new posts (in the right column), or, if you’re on Twitter, you can follow my blog there! It’s automatic, so don’t expect me to be posting much, and it doesn’t mean that’s a good way to reach me - but updates are posted there now. Happy flying!
